How to Build a Creative Testing Framework That Scales

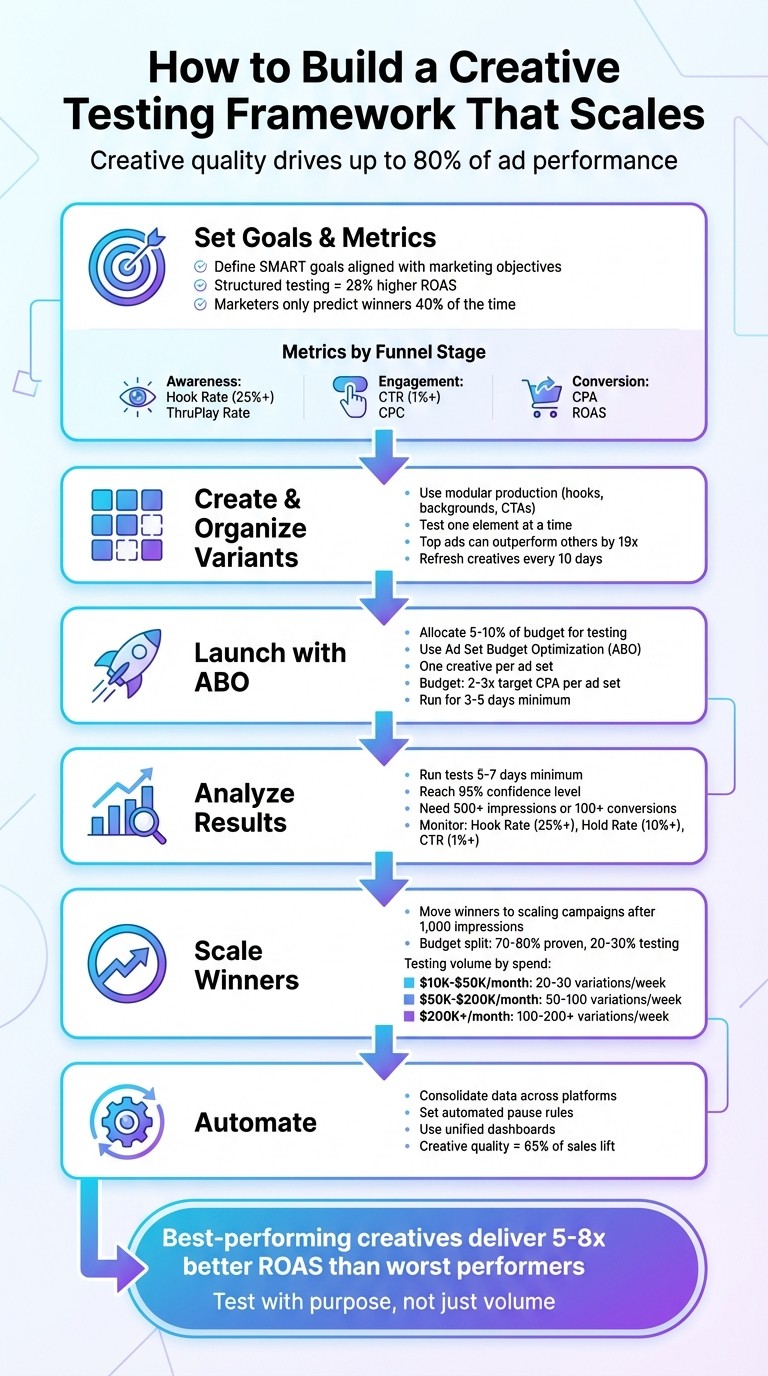

Creative testing is the backbone of successful ad campaigns, especially with platforms like Meta, Google, and TikTok prioritizing diverse content over traditional targeting. With content quality driving up to 80% of ad performance, a structured testing framework is non-negotiable. Here's the bottom line:

Set clear goals: Align testing metrics (like CPA, ROAS, or Hook Rate) with your marketing objectives.

Organize systematically: Use modular production to create variations efficiently and test one element at a time.

Launch with precision: Allocate 5–10% of your budget for testing, using Ad Set Budget Optimization (ABO) to ensure fairness.

Analyze thoroughly: Run tests for at least 7 days, focusing on metrics like Hook Rate (25%+), CTR (1%+), and ROAS.

Scale winners: Transition top-performing ads to scaling campaigns and refresh creatives every 10 days to avoid fatigue.

Tools like Bigeye’s EyeSight simplify this process by consolidating data across platforms, enabling faster, data-driven decisions. To stay competitive, test 20–200 variations weekly (depending on budget) and automate workflows wherever possible. Success lies in testing with purpose, not volume.

6-Step Creative Testing Framework for Scaling Ad Campaigns

Step 1: Set Your Goals and Metrics

The first step in creative testing is all about setting up a solid, measurable foundation. Before diving into tests, you need to know exactly what you're measuring. Without clear goals, you risk spending your budget without gaining useful insights. Here's a compelling stat: advertisers with a structured creative testing process see a 28% higher ROAS compared to those who test randomly [4]. Interestingly, marketers only predict top-performing creatives correctly 40% of the time [4].

Your goals should directly tie into your overall marketing objectives. For example, if you're aiming to boost brand awareness, focus on metrics like Hook Rate (3-second video views) and ThruPlay rates. If conversions are your priority, track metrics like Cost Per Acquisition (CPA) and Return on Ad Spend (ROAS) [6][8]. Make sure your goals are SMART - Specific, Measurable, Achievable, Relevant, and Time-bound. For instance, you might aim to "reduce CPA to under $20 within 30 days." This kind of clarity ensures your testing aligns with broader marketing strategies.

Define Your Testing Goals

To get started, align your testing goals with the stages of your marketing funnel and set clear performance benchmarks for each. For awareness campaigns, focus on whether your creative grabs attention - metrics like Hook Rate and ThruPlay rates are key [5][6]. For consideration campaigns, look at Click-Through Rate (CTR) and Cost Per Click (CPC) to gauge interest [8][10]. For conversion-focused campaigns, track CPA and ROAS to see if your creative is driving profitable sales [6][8].

Use your account's historical data to set realistic benchmarks. For example, if your current CPA is $30, it wouldn't be reasonable to expect it to drop to $15 overnight. Instead, aim for $25 within a couple of weeks. Conduct a gap analysis on your current ad performance to pinpoint weaknesses in messaging, formats, or audience engagement [10]. This analysis will show you exactly where to focus your efforts.

Choose Your Performance Metrics

Once your goals are defined, pick metrics that directly reflect those objectives. Here's a key insight: creative quality accounts for 47% of ad performance variability [4]. The best-performing creative in a test often delivers 5x to 8x better ROAS than the worst [4]. Choosing the right metrics can speed up the process of identifying winning creatives.

Here’s a quick guide to matching metrics with your funnel stage:

| Marketing Objective | Primary Testing Metric | Secondary/Supporting Metric |
|---|---|---|
| Brand Awareness | Hook Rate (3s Views / Impressions) | ThruPlay Rate, CPM |
| Engagement | Click-Through Rate (CTR) | Hold Rate (50% Video View), CPC |
| Direct Response | Cost Per Acquisition (CPA) | Conversion Rate (CVR), ROAS |
| Retention/LTV | Return on Ad Spend (ROAS) | Average Order Value (AOV), Add to Cart % |
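To make the metric definitions concrete, here is a minimal Python sketch that derives them from raw counts. The `AdStats` field names are illustrative and not tied to any platform's export format. Dividing Hold Rate by impressions is an assumption; some teams divide by 3-second views instead.

```python
from dataclasses import dataclass


@dataclass
class AdStats:
    """Raw counts for one creative (field names are hypothetical)."""
    impressions: int
    views_3s: int       # 3-second video views
    views_50pct: int    # views that reached 50% of the video
    clicks: int
    conversions: int
    spend: float        # ad spend in account currency
    revenue: float      # revenue attributed to the ad


def safe_div(numerator: float, denominator: float) -> float:
    """Return 0.0 instead of raising when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def funnel_metrics(s: AdStats) -> dict[str, float]:
    """Compute the testing metrics from the table for one creative."""
    return {
        "hook_rate": safe_div(s.views_3s, s.impressions),
        # Assumption: hold rate is measured against impressions.
        "hold_rate": safe_div(s.views_50pct, s.impressions),
        "ctr": safe_div(s.clicks, s.impressions),
        "cvr": safe_div(s.conversions, s.clicks),
        "cpa": safe_div(s.spend, s.conversions),
        "roas": safe_div(s.revenue, s.spend),
    }


example = AdStats(impressions=10_000, views_3s=2_800, views_50pct=1_200,
                  clicks=150, conversions=6, spend=120.0, revenue=420.0)
metrics = funnel_metrics(example)
```

Note that `safe_div` reports a CPA of 0.0 for an ad with no conversions, so treat a zero CPA as "no data" rather than as a cheap acquisition.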

Tools like Bigeye's EyeSight platform can simplify this process. EyeSight consolidates metrics from platforms like Meta Ads Manager, Google Ads, and TikTok into one dashboard. This unified view makes it easier to identify patterns, decide which creatives to scale, and determine which ones to cut.

Before launching your test, set clear thresholds. For example, pause an ad if its CTR falls below 0.5% after three days, or declare a winner if its ROAS exceeds 3x within seven days [4]. However, don’t rush to conclusions - let your ads run for at least 1,000 impressions and seven days to ensure the data is statistically reliable [4][6]. This way, you avoid prematurely cutting a potential winner or scaling an outlier.
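One way to keep these thresholds honest is to write them down as code before the test starts. The sketch below is one possible reading of the rules above, not a platform feature: it assumes no decision is made before 1,000 impressions, that the pause rule applies from day three, and that a winner is only declared from day seven. The function and constant names are hypothetical.

```python
MIN_IMPRESSIONS = 1_000      # minimum delivery before any decision
PAUSE_AFTER_DAYS = 3         # earliest day the pause rule may fire
PAUSE_BELOW_CTR = 0.005      # 0.5% CTR
WINNER_AFTER_DAYS = 7        # earliest day a winner may be declared
WINNER_ABOVE_ROAS = 3.0      # 3x ROAS


def evaluate_creative(impressions: int, days_running: int,
                      ctr: float, roas: float) -> str:
    """Return 'pause', 'winner', or 'keep_running' for one creative."""
    if impressions < MIN_IMPRESSIONS:
        # Too little delivery to judge either way.
        return "keep_running"
    if days_running >= PAUSE_AFTER_DAYS and ctr < PAUSE_BELOW_CTR:
        return "pause"
    if days_running >= WINNER_AFTER_DAYS and roas > WINNER_ABOVE_ROAS:
        return "winner"
    return "keep_running"
```

For example, `evaluate_creative(5000, 5, 0.012, 3.4)` keeps the ad running because the seven-day window has not elapsed, even though ROAS already looks strong.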

Step 2: Create and Organize Creative Variants

Once your goals and metrics are clear, it’s time to develop creative assets for testing. Creative quality is a game-changer: as noted in Step 1, it explains roughly half of the variability in ad performance. In fact, a well-designed ad can outperform another by up to 19 times just by using a better approach [8]. That’s why testing multiple creative angles is so important.

Build Creative Variants Systematically

Start by using modular production. Break your creative into reusable components - like hooks, backgrounds, value propositions, and CTAs - to quickly generate multiple variations [3]. For instance, with 5 hooks, 4 backgrounds, and 3 CTAs, you can create 60 unique combinations without starting from scratch [3].
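The combination count is easy to verify with a short Python sketch. The component names are placeholders for your own assets.

```python
from itertools import product

# Placeholder component libraries: 5 hooks, 4 backgrounds, 3 CTAs.
hooks = [f"hook_{i}" for i in range(1, 6)]
backgrounds = [f"background_{i}" for i in range(1, 5)]
ctas = [f"cta_{i}" for i in range(1, 4)]

# Every (hook, background, CTA) triple is one candidate variant.
variants = list(product(hooks, backgrounds, ctas))

print(len(variants))  # 5 * 4 * 3 = 60
```

In practice you would rarely launch all 60 at once. The full grid is a menu to pick from when isolating one element per test.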

Follow a three-phase workflow to stay organized:

Concept Phase: Test entirely different creative angles.

Element Phase: Optimize individual components, such as hooks or headlines.

Scaling Phase: Combine the best-performing elements into finalized ads.

This method ensures your testing is focused, helping you avoid wasting time and money on random ideas.

When making variants, change only one element at a time, such as the hook, headline, or CTA. This makes it easier to pinpoint what’s driving results, for example whether a Thumbstop Ratio (another name for Hook Rate) above 25% is due to a specific hook [15].

To keep everything organized, use a standardized naming system. For example, a name like 03-30-2026_UGC_ProblemHook_Casual_RedCTA_v2 can quickly tell you the date, concept, and key elements of the ad. Pair this with a testing matrix - a spreadsheet mapping out variables like format, hook style, and value proposition. This ensures every variant has a clear purpose [3].
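A naming convention only helps if it is applied mechanically. Below is a minimal sketch that builds and parses names shaped like the example above. The six field labels (`launch_date`, `concept`, `hook`, `style`, `cta`, `version`) are an assumed interpretation of that example, so adapt them to your own scheme.

```python
from datetime import date, datetime
from typing import NamedTuple


class CreativeName(NamedTuple):
    """Fields encoded in a creative's name (labels are assumptions)."""
    launch_date: date
    concept: str
    hook: str
    style: str
    cta: str
    version: int


def build_name(c: CreativeName) -> str:
    """Join the fields with underscores, using an MM-DD-YYYY date."""
    return "_".join([c.launch_date.strftime("%m-%d-%Y"), c.concept,
                     c.hook, c.style, c.cta, f"v{c.version}"])


def parse_name(name: str) -> CreativeName:
    """Split a name back into fields, rejecting malformed input."""
    parts = name.split("_")
    if len(parts) != 6:
        raise ValueError(f"expected 6 underscore-separated fields: {name!r}")
    raw_date, concept, hook, style, cta, raw_version = parts
    if not (raw_version.startswith("v") and raw_version[1:].isdigit()):
        raise ValueError(f"version must look like 'v2': {raw_version!r}")
    launch_date = datetime.strptime(raw_date, "%m-%d-%Y").date()
    return CreativeName(launch_date, concept, hook, style, cta,
                        int(raw_version[1:]))


parsed = parse_name("03-30-2026_UGC_ProblemHook_Casual_RedCTA_v2")
```

Because underscores separate fields, individual values such as `ProblemHook` must not contain underscores themselves.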

Here’s a practical example:

In 2026, NovaGear, a consumer tech brand, used Koro's "URL-to-Video" AI tool to create video ads for 50 SKUs simultaneously. By pulling data from product pages and using AI avatars instead of physical creators, they produced 50 videos in just 48 hours. This approach saved them about $2,000 in logistics and shipping costs [15].

This shows how modular production and AI tools can speed up workflows and reduce costs.

Keep Brand Consistency Across Variants

While experimenting, ensure all variants stay true to your brand identity. Stick to a central theme - like "outdoor lifestyle" or "productivity tools" - and maintain consistency in fonts, colors, and tone. For example, if your brand uses a specific shade of blue and a clean sans-serif font, keep those elements intact even when testing different hooks or CTAs. Using core templates for always-on campaigns can also provide a consistent foundation while allowing room for creative experimentation.

Document your variations to make comparisons easier and more precise. This way, you can measure the impact of changes without confusing your audience.

Compare Variants with a Table

Tracking each tested element and its expected impact can simplify decision-making. A detailed table like the one below helps you analyze performance and move successful creatives into high-budget campaigns.

| Element Type | Variations Tested | Expected Impact on Metrics |
|---|---|---|
| Hook (First 3s) | Problem-focused vs. Benefit-driven vs. Curiosity | |
| Messaging/Body | Feature-focused vs. Emotional appeal vs. Testimonial | |
| Visual Style | UGC-style vs. High-production vs. Static Image | |
| Call to Action | "Download Now" vs. "Get Started" vs. "Limited Offer" | |

This table can guide your priorities. For instance, if your goal is brand awareness, focus on testing hooks first. If conversions are your main objective, target CTAs and messaging instead. To avoid ad fatigue, top-performing brands refresh their creative assets every 10 days [8]. If your budget is between $10,000 and $50,000 per month, aim to test 20–30 new concepts monthly [13].

Step 3: Launch Testing Campaigns with Ad Set Budget Optimization

When testing creative variants, it's crucial to ensure fair and unbiased evaluation. One effective method is using Ad Set Budget Optimization (ABO), which allows you to control spending for each variant equally. This approach avoids the algorithm's tendency to favor certain ads prematurely, ensuring you collect accurate performance data.

Set Up Campaigns for Testing

Begin by setting up a dedicated testing campaign, separate from your main scaling campaigns. This keeps your primary budget safe while providing untested creatives the exposure they need. Allocate about 5% to 10% of your total monthly ad spend for this testing. For instance, if your monthly budget is $50,000, set aside $2,500 to $5,000 for this purpose [19].

In your testing campaign, maintain consistent settings across all ad sets. Use identical targeting criteria - such as audience, objectives, placement options, and attribution windows - so that the only variable being tested is the creative itself [16][19]. For broader reach, stick with general targeting parameters like country, age, and gender. This ensures the creative has the opportunity to find its audience organically, making it easier to scale successful variants later [5][7].

Follow the "One Creative, One Ad Set" rule by assigning each creative variant to its own ad set [18][9]. This prevents the algorithm from prematurely favoring one ad over others based on early, unreliable signals. To gather meaningful data, set each ad set’s daily budget to two to three times your target CPA. This ensures the algorithm collects enough data - ideally 2 to 3 conversions per day - to move past random fluctuations and provide actionable insights [16][19].

Use ABO for Accurate Data

Once your campaign structure is ready, you can fine-tune budget allocation using ABO. At the campaign level, disable the "Advantage+ campaign budget" option to manually control spending for each ad set [16]. While Campaign Budget Optimization (CBO) can be helpful for scaling proven ads, it tends to disproportionately allocate budget to ads that perform well early on, often at the expense of other creatives that haven't had enough time to prove their worth [17][19].

"CBO campaigns starve new creatives by optimizing for cheap, early clicks... The algorithm grabbed onto that early signal and said, 'This is the one!'" - TryCrush [18]

ABO, on the other hand, ensures that each creative gets an equal chance. Allocate enough budget for each ad set to reach at least $50 to $100 in spend or 1,000 to 2,000 impressions before making any decisions [3][17]. Give each test at least 3–5 days before making early cuts, to account for auction fluctuations and attribution delays, and a full 7 days before declaring a winner. Keep in mind that optimization typically stabilizes after an ad set achieves 50 optimization events within a 7-day period [16][17][19].

To streamline testing at scale, consider tools like Bigeye's media buying platform. Features like Bigeye-CLI allow teams to manage large testing environments efficiently, ensuring accurate data collection and analysis. By using such tools, creative testing becomes a precise and systematic process for identifying the ads that will drive your next growth phase [20].

Step 4: Analyze Results and Improve Performance

Now that you've established goals and testing frameworks, it's time to turn your data into actionable improvements. This is where data-driven strategies outshine guesswork.

Run Tests Until You Have Enough Data

Patience is key when analyzing results. Run tests for at least 5 to 7 days so that daily fluctuations in behavior average out [5][7]. Avoid acting on results from the first 24 hours, as they can be misleading and costly.

Your primary objective is reaching statistical significance, often defined as a 95% confidence level [7][22]: if the variants truly performed the same, a gap this large would appear by chance less than 5% of the time. As a practical floor, make sure each creative variant gets at least 500 impressions or 7–10 days of consistent delivery [5][7][22]. For conversion-based decisions, aim for at least 100 conversions per variant before declaring a winner [8]. Cap tests at 14 days, however, so that external factors such as seasonal changes don't skew your results [5][7].
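For readers who want to check significance themselves, here is a minimal sketch of a two-proportion z-test using only Python's standard library. It is one common way to compare two conversion rates (or CTRs) and is not necessarily what any ad platform runs internally. The function name and the example counts are illustrative.

```python
import math


def two_proportion_z_test(successes_a: int, trials_a: int,
                          successes_b: int, trials_b: int
                          ) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in two rates."""
    rate_a = successes_a / trials_a
    rate_b = successes_b / trials_b
    # Pooled rate under the null hypothesis that both variants are equal.
    pooled = (successes_a + successes_b) / (trials_a + trials_b)
    std_err = math.sqrt(pooled * (1 - pooled)
                        * (1 / trials_a + 1 / trials_b))
    if std_err == 0:
        return 0.0, 1.0
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Variant A: 100 conversions from 5,000 clicks; variant B: 150 from 5,000.
z, p = two_proportion_z_test(100, 5_000, 150, 5_000)
significant = p < 0.05  # 95% confidence level
```

A p-value below 0.05 corresponds to the 95% confidence level mentioned above. The normal approximation behind this test is unreliable with very small counts, which is one reason to wait for the conversion minimums before deciding.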

Platforms like Bigeye's EyeSight analytics simplify this process by consolidating data from Meta, TikTok, and Google into one dashboard. This lets you monitor key metrics like ROAS, CAC, LTV, and revenue attribution in real time[23].

Once you've collected enough data, the focus shifts to identifying performance patterns and refining your approach.

Find Patterns in Your Data

When analyzing results, follow a structured approach to pinpoint where performance is breaking down. Start with spend distribution - if the algorithm isn't allocating budget to a creative, it's likely signaling low confidence in its ability to convert[5]. Next, check the hook rate (viewers watching the first 3 seconds). A rate of 25% or higher is ideal[5]. If it's below that, your opening visuals or messaging may need a stronger impact.

Then, evaluate the hold rate, which measures how many viewers watch at least 50% of your video. A good benchmark is 10% or higher[5]. Low hold rates suggest your content loses interest after the hook, so consider adjusting pacing or adding captions. Move on to the CTR (click-through rate), where a 1%+ rate is a solid indicator of audience interest[5]. Finally, assess CPA and ROAS to gauge overall efficiency[5][21]. (Refer back to Step 1 for detailed metric benchmarks.)
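The top-down walk through the funnel can be sketched as a function that reports the first stage falling short of its benchmark. This is a simplified illustration: it skips the spend-distribution check, takes metrics as plain fractions, and uses a hypothetical `target_cpa` argument for the final efficiency check.

```python
# (metric name, minimum benchmark, suggested next step), in funnel order.
FUNNEL_CHECKS = [
    ("hook_rate", 0.25, "strengthen the opening visuals or message"),
    ("hold_rate", 0.10, "adjust pacing or add captions"),
    ("ctr", 0.01, "revisit the offer and call to action"),
]


def diagnose(metrics: dict[str, float], target_cpa: float) -> tuple[str, str]:
    """Return (stage, advice) for the first stage that breaks down."""
    for name, minimum, advice in FUNNEL_CHECKS:
        if metrics[name] < minimum:
            return name, advice
    if metrics["cpa"] > target_cpa:
        return "cpa", "review the post-click experience"
    return "healthy", "candidate for scaling"


stage, advice = diagnose(
    {"hook_rate": 0.28, "hold_rate": 0.06, "ctr": 0.012, "cpa": 18.0},
    target_cpa=20.0,
)
```

Here the hook clears 25% but only 6% of viewers reach the halfway mark, so the function points at the hold rate before looking at clicks or cost.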

Don’t stop at full ad performance - break your creatives into individual components like hooks, visuals, messaging, and CTAs. This way, you can identify which specific element drives results[21][22]. For instance, if three video ads share the same body content but feature different hooks, you can isolate the most effective hook. This granular analysis often reveals the most actionable insights.

Bigeye's EyeQ research platform takes this further by validating creative concepts and messaging before you spend heavily on ads[23]. Using AI-powered tools and human input, EyeQ provides insights within 2–3 weeks. As Marcus R., CMO of a beauty brand, shared:

"EyeQ research pays for itself ten times over. We used to spend months debating positioning internally. Now we test it with real consumers in weeks and make decisions based on data, not the loudest voice in the room." [23]

| Metric | What It Indicates | Improvement Strategy |
|---|---|---|
| Hook Rate | Effectiveness of the first 3 seconds | Adjust visuals or opening lines for stronger engagement [7][9] |
| Hold Rate | Engagement with main content | Improve pacing, add captions, or shorten video length [5][7] |
| CTR | Audience interest and offer relevance | |
| CPA / ROAS | Overall ad efficiency | |

Armed with these insights, you can now refine your creative strategies.

Refine Based on Data

Refining your creative elements requires a disciplined approach. Focus on isolating changes to ensure performance improvements are directly tied to those adjustments. For example, if a specific hook was present in 80% of your top-performing ads, prioritize that style in future tests[21]. If your CTR is high but CPA is also high, your creative may attract clicks, but the post-click experience might need work[9][1].

Always test one variable at a time to maintain clarity about what drives results[7][22]. This methodical approach builds momentum over time. As noted in a Harvard Business Review study:

"Organizations that embrace experimentation as part of their culture see stronger returns because data-backed decisions compound over time"[22].

Keep an eye on creative fatigue. Most ads lose effectiveness within 2–4 weeks, so identify winners early and introduce fresh variations before performance declines[22]. Monitor frequency metrics - if a creative averages 2.5–3.0 impressions per user, it’s likely time for a refresh[8]. Top-performing brands typically update their creative assets every 10 days to stay ahead[8].

Finally, use a control creative - a proven ad that serves as a baseline for comparison[21][1]. With tools like EyeSight managing data from over 100 platforms, including Meta, Google, Amazon, and retail networks like Walmart and Target, you can refine your strategy with clarity and precision[23].

Step 5: Scale Winning Creative and Set a Testing Schedule

Once you've identified the creatives that perform best through careful testing, the next step is to scale them effectively and establish a regular testing routine. This is what separates stagnant campaigns from those that continue to grow.

Scale Winning Creative to High-Budget Campaigns

After validating your creatives, transition them from testing campaigns to scaling campaigns to reduce risk. The rule of thumb here is clear: once a creative surpasses 1,000 impressions and meets performance benchmarks, it’s ready to scale [3].

Here’s how to allocate your budget effectively: dedicate 70–80% to proven creatives while keeping 20–30% for testing new ones [3]. This balance ensures steady growth while leaving room for innovation. Move your top-performing creatives into Campaign Budget Optimization (CBO) or Advantage+ Shopping Campaigns, where algorithms automatically prioritize the best performers [15].

To scale horizontally, experiment with variations of your winning concepts. This could mean tweaking hooks, using different creators, or changing backgrounds to see if the success holds across different versions [11]. As AdStellar advises:

"The golden rule: separate your testing campaigns from your scaling campaigns. Your testing campaigns exist to validate creative concepts with minimal risk" [3].

Once your winners are scaled, keep the momentum going by implementing a structured creative testing schedule.

Build a Creative Testing Calendar

Consistency is key. A regular testing schedule ensures you’re always ahead of creative fatigue. Aim to launch new creative tests twice a week, such as every Monday and Friday [5].

The number of variations you test depends on your ad spend:

$10,000–$50,000/month: Test 20–30 new variations weekly.

$50,000–$200,000/month: Aim for 50–100 variations weekly.

$200,000+/month: Test 100–200+ new creatives weekly [5].

Stick to the 70/30 rule - spend 70% on refining successful creatives and 30% on exploring new ideas [2]. Keep an eye on performance metrics to spot signs of fatigue. For example, if your click-through rate drops by 20% or your CPA rises by 30% compared to the baseline, it’s time to refresh your campaigns with new variations [3].
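The fatigue signals above translate into a simple baseline comparison. The sketch below assumes you store a baseline CTR and CPA for each creative, for example from its first week of delivery. The function name and default thresholds are illustrative.

```python
def is_fatigued(baseline_ctr: float, current_ctr: float,
                baseline_cpa: float, current_cpa: float,
                ctr_drop: float = 0.20, cpa_rise: float = 0.30) -> bool:
    """Flag a creative whose CTR fell or CPA rose past the thresholds."""
    # Relative change versus baseline, e.g. -0.25 means a 25% drop.
    ctr_change = (current_ctr - baseline_ctr) / baseline_ctr
    cpa_change = (current_cpa - baseline_cpa) / baseline_cpa
    return ctr_change <= -ctr_drop or cpa_change >= cpa_rise


# CTR fell from 1.5% to 1.1% (about a 27% drop): time to refresh.
needs_refresh = is_fatigued(0.015, 0.011, 20.0, 21.0)
```

Either signal alone triggers the flag, which errs on the side of refreshing early rather than letting a declining ad keep spending.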

To stay organized, tools like Bigeye's EyeSight analytics can help you track performance across platforms like Meta, TikTok, Google, and more than 100 others. This makes it easier to identify trends and stick to your testing calendar.

Step 6: Automate and Simplify the Testing Process

Once you've established a solid testing and scaling process, the next logical step is automation. Why? Because manual testing just doesn’t cut it when you’re managing dozens - or even hundreds - of creative variations across multiple platforms. Trying to juggle spreadsheets and scattered dashboards slows everything down. At scale, automation isn’t just helpful - it’s essential. It’s the difference between teams that keep up and those that get bogged down by fragmented data.

The real hurdle? Staying consistent while keeping up the pace. Tools like Bigeye's EyeSight dashboards make this easier by consolidating data from platforms like Meta, Google, TikTok, Amazon, and YouTube into a single, unified view. No more hopping between platforms to track metrics. Instead, you get real-time insights into ROAS, CPA, and LTV, all in one place. This unified approach eliminates misleading data issues, such as when a creative seems to underperform simply because of a data pipeline glitch rather than actual content issues [24]. With everything in one view, you’re set up for smoother testing and optimization.

Automation doesn’t just stop at data - it also transforms production. What used to take days can now be done in minutes with AI-driven tools. For example, AI tools can create video ads in a fraction of the time, slashing both production timelines and costs [15].

To make automation work for you, it’s crucial to establish clear rules. For instance, set thresholds like pausing any creative with a CPA over $75 after spending $100. This way, decisions are made automatically, without the need for constant manual oversight [3]. AI-powered monitoring can also catch early signs of performance dips, such as a 20% drop in click-through rates, and trigger updates to your creatives before you waste more budget [3][21].
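As an illustration, the pause rule above fits in a few lines of Python. This is a generic sketch rather than any platform's rule syntax, and treating a creative with zero conversions as over the CPA limit once it has spent the minimum is an assumption.

```python
def should_pause(spend: float, conversions: int,
                 max_cpa: float = 75.0, min_spend: float = 100.0) -> bool:
    """Pause a creative whose CPA exceeds max_cpa after min_spend."""
    if spend < min_spend:
        # Not enough spend yet to judge the creative.
        return False
    if conversions == 0:
        # Assumption: no conversions after min_spend counts as failing.
        return True
    return spend / conversions > max_cpa


# Hypothetical creative IDs mapped to (spend, conversions).
creatives = {"ad_a": (120.0, 1), "ad_b": (120.0, 2), "ad_c": (80.0, 0)}
to_pause = [name for name, (spend, conv) in creatives.items()
            if should_pause(spend, conv)]
```

In this example only `ad_a` is paused: it spent $120 for one conversion, while `ad_b` has a $60 CPA and `ad_c` has not yet reached the $100 spend floor.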

Bigeye’s configuration tools further streamline operations, especially when managing multiple campaigns or clients. Their Bigeye-CLI allows you to handle workspaces at scale, refresh metrics, and run comparisons across environments - all without tedious manual setup [20]. As Tyler Jones, Senior Staff Engineer at Bigeye, explains:

"Inaccessible design is an operational liability. When your data team can't use an observability tool effectively because of poor contrast or confusing navigation, incidents take longer to resolve" [24].

Conclusion

Building a scalable approach to creative testing isn’t about cranking out more ads and hoping for the best. It’s about creating a structured process that uses data to guide decisions. By now, it’s clear that setting clear goals, organizing creative variations, isolating testing environments, and automating workflows can significantly improve marketing outcomes. Here’s the reality: creative quality accounts for up to 65% of sales lift in digital advertising[21]. The brands that thrive are those that test, learn, and adapt faster than their competitors.

This shift toward creative-first optimization means outdated tools like spreadsheets and disconnected dashboards just won’t cut it anymore. Managing dozens of ad variations across platforms like Meta, Google, TikTok, and Amazon requires unified visibility. That’s where Bigeye’s EyeSight comes in - offering a single, real-time dashboard to help identify top performers and cut underperforming ads quickly, saving both time and budget.

Here’s the framework to keep in mind: dedicate 10% to 20% of your ad budget to testing[15], set clear criteria for stopping underperformers before launching, and introduce new creative variations weekly. For high-spending accounts, this could mean testing 100–200+ variations per week to stay ahead of creative fatigue[5]. The real secret? It’s not about sheer volume - it’s about testing with purpose and speed.

As Angad Singh from Segwise aptly puts it, "The organizations that win the performance marketing game in the coming year will be the ones that can produce 10 new, data-backed creative iterations in the time it takes their competitors to launch one unproven concept."[12] With the right tools, a solid framework, and consistent discipline, you’re not just running ad tests - you’re building a system for ongoing growth and improvement.

FAQs

How do I pick the right test budget if my spend is small?

If your advertising budget is limited, set aside a small amount for testing. For example, allocate $20–$50 to test overall concepts and $30–$75 for testing specific elements. Prioritize quick testing cycles and frequent iterations to pinpoint the creatives that perform best. Using a phased strategy - beginning with broader concept tests and then focusing on individual elements - can help you gather useful insights without overspending.

When is a creative “statistically significant” enough to call a winner?

A creative is "statistically significant" when the gap between its performance metrics, such as click-through rate or conversion rate, and those of the other variants is larger than random variation would plausibly produce. The usual bar is a 95% confidence level. In practice, wait until each variant has run for at least 7 days and, for conversion-based decisions, collected around 100 conversions before calling a winner.

How do I prevent creative fatigue while scaling winners?

To keep your campaigns fresh and effective, watch for early warning signs of creative fatigue, such as drops in engagement or conversion rates. Regularly update your creatives by tweaking visuals, messaging, or formats to keep your audience interested. Using a high-velocity testing approach can make a big difference - systematically test and refresh your creatives to pinpoint what works best. This helps you avoid overexposure and keeps your campaigns performing well as they grow.

May 12, 2026